DynamoDB & System.Text.Json

In the last post of this blog series, I made the case for the various ways in which the System.Text.Json library is shaping the latest and greatest in .NET serialization, and for what it brings to the table for serverless executables in particular.

This one's hands-on

In this post, I'll take this to town: we'll explore a vertical slice of a .NET Standard 2.1 library that provides data access to DynamoDB using the latest AWS SDK for .NET (v3.x), examining existing Newtonsoft.Json use cases and replacing them along the way with suitable System.Text.Json equivalents. Before I start, though, a kind reminder: this is not a "how-to" guide on migrating from Newtonsoft.Json to System.Text.Json. There are numerous blog posts out there on how to achieve that, but you should be (mostly) fine with the migration guide in the official documentation, which covers all the basics as well as some more sophisticated use cases.

Setting our carpaccio up

Suppose we have a data-layer (or entity, if that's your lingo) class modelling a job listing (related: C# 9.0 records can't come soon enough), like the following:

```csharp
using System;
using System.Runtime.Serialization;   // DataContract, DataMember
using Amazon.DynamoDBv2.DataModel;    // DynamoDBTable, DynamoDBHashKey, DynamoDBRangeKey, DynamoDBProperty

// GuidConverter, EnumConverter<T>, DateTimeConverter, GenericPropertyConverter<T> and
// BoolConverter are our own custom DynamoDB converters (none of them touch JSON).
[DataContract, DynamoDBTable("Jobs")]
public class Job
{
    [DataMember, DynamoDBHashKey(AttributeName = "JobId", Converter = typeof(GuidConverter))]
    public Guid JobId { get; set; }

    [DataMember, DynamoDBRangeKey(AttributeName = "OrganizationId", Converter = typeof(GuidConverter))]
    public Guid OrganizationId { get; set; }

    [DataMember, DynamoDBProperty(Converter = typeof(EnumConverter<JobType>))]
    public JobType Type { get; set; }

    [DataMember, DynamoDBProperty(Converter = typeof(EnumConverter<JobStatus>))]
    public JobStatus Status { get; set; }

    [DataMember, DynamoDBProperty(Converter = typeof(DateTimeConverter))]
    public DateTime StartDate { get; set; }

    [DataMember, DynamoDBProperty(Converter = typeof(DateTimeConverter))]
    public DateTime? EndDate { get; set; }

    [DataMember, DynamoDBProperty(Converter = typeof(GenericPropertyConverter<ChangeTracking>))]
    public IChangeTracking CreatedBy { get; set; }

    [DataMember, DynamoDBProperty(Converter = typeof(GenericPropertyConverter<ChangeTracking>))]
    public IChangeTracking ModifiedBy { get; set; }

    [DataMember, DynamoDBProperty(Converter = typeof(BoolConverter))]
    public bool IsActive { get; set; }

    // Stored as a plain string attribute, but its content is a JSON document.
    [DataMember, DynamoDBProperty]
    public string Interview { get; set; }
}
```

This is a typical example of a class whose sole purpose is to persist and retrieve entities of this type from a DynamoDB table. Several of its properties are built-in .NET types (DateTime, Guid and enums), and for those we have custom-built converters that handle the specifics of converting them to and from DynamoDB primitives. As one might guess from their names, though, none of these converters handle JSON payloads, so they are in no way affected by replacing Newtonsoft.Json with System.Text.Json and are not our focal point today. That goes for every property except the very last one, Interview. Interview is a string and is stored as such in DynamoDB too; more precisely, it is a JSON string which, depending on business logic requirements, we may or may not want to deserialize into a suitable .NET class:

```csharp
using System;

public class Interview
{
    public Question[] Questions { get; set; }
}

public class Question
{
    public string Text { get; set; }
    public string Attempts { get; set; }
    public string ResponseTime { get; set; }
    public Video[] Video { get; set; }
    public int QuestionId { get; set; }
}

public class Video
{
    public string Name { get; set; }
    public DateTime Created { get; set; }
    public DateTime Modified { get; set; }
    public string DirectAccessUrl { get; set; }
    public string CdnAccessUrl { get; set; }
    public string MimeType { get; set; }
}
```

As we can see, Newtonsoft.Json doesn't even need JsonProperty annotations to make something as trivial as this work. It matches property names case-insensitively when deserializing and handles the whole deal on its own, obscuring (for better or for worse) the implementation details in the process.
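For contrast, System.Text.Json matches property names case-sensitively by default. Here's a minimal sketch, assuming the stored JSON uses camelCase keys; QuestionSketch and CaseSensitivityDemo are hypothetical names for illustration, not part of our codebase:

```csharp
using System.Text.Json;

// Hypothetical minimal model with PascalCase property names, like the classes above.
public class QuestionSketch
{
    public string Text { get; set; }
    public int QuestionId { get; set; }
}

public static class CaseSensitivityDemo
{
    // Assumed shape: camelCase keys, as they might appear in the stored Interview JSON.
    public const string Json = "{\"text\":\"Tell us about yourself\",\"questionId\":1}";

    // Reused instance that opts in to case-insensitive name matching.
    private static readonly JsonSerializerOptions CaseInsensitive =
        new JsonSerializerOptions { PropertyNameCaseInsensitive = true };

    // Default options: "text" does not match Text, so both properties keep their defaults.
    public static QuestionSketch Strict() =>
        System.Text.Json.JsonSerializer.Deserialize<QuestionSketch>(Json);

    // Case-insensitive matching gets closer to what Newtonsoft.Json does out of the box.
    public static QuestionSketch Lenient() =>
        System.Text.Json.JsonSerializer.Deserialize<QuestionSketch>(Json, CaseInsensitive);
}
```

Strict() comes back with a null Text and a QuestionId of 0, because no key matched; Lenient() fills both in.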

Using Newtonsoft.Json, a bare-minimum JsonSerializer wrapper would look like this:

```csharp
using Newtonsoft.Json;
using Newtonsoft.Json.Converters; // StringEnumConverter

// A thin wrapper around Newtonsoft.Json that (de)serializes enums by name.
public static class JsonSerializer
{
    private static readonly JsonSerializerSettings Settings = new JsonSerializerSettings
    {
        Converters = { new StringEnumConverter() }
    };

    public static string Serialize<T>(T obj) => JsonConvert.SerializeObject(obj, Settings);

    public static T Deserialize<T>(string json) => JsonConvert.DeserializeObject<T>(json, Settings);
}
```

And a naive, optimistic usage of this class would be as simple as:

```csharp
var interview = JsonSerializer.Deserialize<Interview>(job.Interview);
```

Mirroring that, but replacing Newtonsoft.Json, a bare-minimum JsonSerializer using System.Text.Json would look like this:

```csharp
using System.Text.Json;
using System.Text.Json.Serialization; // JsonStringEnumConverter

// A thin wrapper around System.Text.Json that (de)serializes enums by name.
public static class JsonSerializer
{
    // JsonSerializerOptions caches type metadata, so build one instance and reuse it
    // instead of allocating new options on every call.
    private static readonly JsonSerializerOptions Options = CreateOptions();

    private static JsonSerializerOptions CreateOptions()
    {
        var options = new JsonSerializerOptions
        {
            WriteIndented = true,      // only affects serialization
            AllowTrailingCommas = true // only affects deserialization
        };
        options.Converters.Add(new JsonStringEnumConverter());
        return options;
    }

    // Fully qualified: inside this class, the bare name JsonSerializer refers to the class itself.
    public static string Serialize<T>(T obj) =>
        System.Text.Json.JsonSerializer.Serialize(obj, Options);

    public static T Deserialize<T>(string json) =>
        System.Text.Json.JsonSerializer.Deserialize<T>(json, Options);
}
```

We won't explore the ins and outs of the JsonSerializerOptions class for now; suffice it to say that it's where all the fine tuning for a particular de/serializing operation takes place. Once you realize just how much Newtonsoft.Json was handling behind the scenes (tip: everything you know is a lie), you will find yourself spending quite some time with this set of options.
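As an illustration of that fine tuning, here's a minimal sketch of a reusable options instance that tolerates comments and trailing commas (both rejected by System.Text.Json's defaults) and reads and writes enums by name. ListingStatus, ListingSketch and LenientReadOptions are hypothetical names, not part of our codebase:

```csharp
using System.Text.Json;
using System.Text.Json.Serialization;

// Hypothetical enum and model, for illustration only.
public enum ListingStatus { Draft, Open, Closed }

public class ListingSketch
{
    public ListingStatus Status { get; set; }
    public string Title { get; set; }
}

public static class LenientReadOptions
{
    // Built once and reused: an options instance caches per-type metadata
    // and becomes immutable after its first (de)serialization call.
    public static readonly JsonSerializerOptions Instance = Create();

    private static JsonSerializerOptions Create()
    {
        var options = new JsonSerializerOptions
        {
            AllowTrailingCommas = true,                    // accept {"a":1,}
            ReadCommentHandling = JsonCommentHandling.Skip // accept // and /* */ comments
        };
        options.Converters.Add(new JsonStringEnumConverter()); // enums by name, not number
        return options;
    }
}
```

The converter has to be added before the instance is first used; adding it afterwards throws an InvalidOperationException.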

The Interview class we saw above (shown here in its fuller form as InterviewContext) will need its own set of enhancements before it can be successfully de/serialized using this new JsonSerializer class:

```csharp
using System;
using System.Text.Json.Serialization; // JsonPropertyName, JsonConverter

public class InterviewContext
{
    [JsonPropertyName("intro")]
    public Intro Intro { get; set; }

    [JsonPropertyName("closing")]
    public Closing Closing { get; set; }

    [JsonPropertyName("questions")]
    public Question[] Questions { get; set; }
}

public class Intro
{
    [JsonPropertyName("text")]
    public string Text { get; set; }

    [JsonPropertyName("video")]
    public Video[] Video { get; set; }
}

// No [JsonPropertyName] attributes here: with default options these properties
// bind only to JSON keys that match their C# names exactly.
public class Video
{
    public string ProviderName { get; set; }
    public string FileId { get; set; }
    public string Name { get; set; }

    // JsonStringToDateTimeConverter and JsonStringToInt32Converter are custom converters, shown below.
    [JsonConverter(typeof(JsonStringToDateTimeConverter))]
    public DateTime Created { get; set; }

    [JsonConverter(typeof(JsonStringToDateTimeConverter))]
    public DateTime Modified { get; set; }

    public string DirectAccessUrl { get; set; }
    public string CdnAccessUrl { get; set; }
    public string MimeType { get; set; }
}

public class Closing
{
    [JsonPropertyName("text")]
    public string Text { get; set; }

    [JsonPropertyName("video")]
    public Video[] Video { get; set; }
}

public class Question
{
    [JsonPropertyName("text")]
    public string Text { get; set; }

    [JsonPropertyName("attempts"), JsonConverter(typeof(JsonStringToInt32Converter))]
    public int Attempts { get; set; }

    [JsonPropertyName("responseTime"), JsonConverter(typeof(JsonStringToInt32Converter))]
    public int ResponseTime { get; set; }

    [JsonPropertyName("video")]
    public Video[] Video { get; set; }

    [JsonPropertyName("questionId")]
    public int QuestionId { get; set; }
}
```

A couple of things were added there:

- JsonPropertyName is the System.Text.Json equivalent of Newtonsoft.Json's JsonProperty (you can get away without adding these if you set the PropertyNamingPolicy of your JsonSerializerOptions accordingly), and
- we had to add custom converter classes for certain properties.
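To illustrate the naming-policy alternative to JsonPropertyName, here's a minimal sketch assuming the stored JSON uses camelCase keys throughout (PlainQuestion and CamelCaseOptions are hypothetical names). Keep in mind that the policy applies to every property, so it only fits if all keys, including those of nested objects, follow the same convention:

```csharp
using System.Text.Json;

// Hypothetical attribute-free model, for comparison with the annotated Question class.
public class PlainQuestion
{
    public string Text { get; set; }
    public int QuestionId { get; set; }
}

public static class CamelCaseOptions
{
    // The camel-case policy maps Text <-> "text" and QuestionId <-> "questionId"
    // when both reading and writing, so no [JsonPropertyName] attributes are needed.
    public static readonly JsonSerializerOptions Instance = new JsonSerializerOptions
    {
        PropertyNamingPolicy = JsonNamingPolicy.CamelCase
    };
}
```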

Let's examine why those custom converters were necessary. In the Question class, it turns out that both the Attempts and the ResponseTime properties were persisted in the database as either an integer or a string... not exactly a tour de force in data engineering, but it is what it is. Newtonsoft.Json handles that without blinking, so you'd never know; for the rest of us, here's how to write a custom JsonConverter that handles both representations of such a property using System.Text.Json:

public class JsonStringToInt32Converter : JsonConverter<int>

{

public override int Read(ref Utf8JsonReader reader, Type typeToConvert, JsonSerializerOptions options)

{

if (reader.TokenType == JsonTokenType.String)

{

int.TryParse(reader.GetString(), out int result);

return result;

}

if (reader.TokenType == JsonTokenType.Number)

{

return reader.GetInt32();

}

throw new JsonException();

}

public override void Write(Utf8JsonWriter writer, int value, JsonSerializerOptions options)

{

writer.WriteStringValue(value.ToString());

}

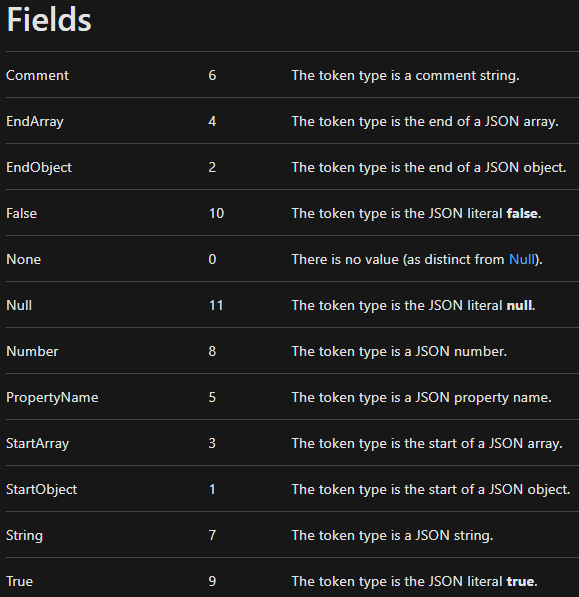

}Now, what's really going on here is that, considering the data predicament as described above, we need to manually account for these two possibilities. Handily, System.Text.Json includes an enum called JsonTokenType in it's namespace that is a representation of the underlying primitive data type (read: not .NET Type) of a particular value. These are the current members of that enum as of .NET Core 3.1:

This is extremely useful, as it allows us to write converters tailored to a wide range of scenarios. Every class that derives from the abstract JsonConverter<T> class must override the Read and Write methods, and the return type of Read is T itself. The design choices the .NET team made when creating this library make for some great self-documenting code in our codebases.
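The same switch-on-TokenType idea extends to other messy inputs. As a minimal sketch (LenientBooleanConverter and ActiveFlag are hypothetical, not from our codebase), here's a converter that accepts a JSON boolean, a "true"/"false" string, or a number (0 meaning false, anything else true) for a bool property:

```csharp
using System;
using System.Text.Json;
using System.Text.Json.Serialization;

// Hypothetical converter: one bool property, several possible JSON representations.
public class LenientBooleanConverter : JsonConverter<bool>
{
    public override bool Read(ref Utf8JsonReader reader, Type typeToConvert, JsonSerializerOptions options)
    {
        switch (reader.TokenType)
        {
            case JsonTokenType.True:
                return true;
            case JsonTokenType.False:
                return false;
            case JsonTokenType.String when bool.TryParse(reader.GetString(), out bool parsed):
                return parsed; // bool.TryParse ignores case: "True", "false", ...
            case JsonTokenType.Number when reader.TryGetInt32(out int number):
                return number != 0;
            default:
                throw new JsonException($"Cannot convert token {reader.TokenType} to bool.");
        }
    }

    // Always write a proper JSON boolean.
    public override void Write(Utf8JsonWriter writer, bool value, JsonSerializerOptions options) =>
        writer.WriteBooleanValue(value);
}

// Hypothetical model using the converter.
public class ActiveFlag
{
    [JsonConverter(typeof(LenientBooleanConverter))]
    public bool IsActive { get; set; }
}
```

Unrecognized input, such as the string "yes", ends up as a JsonException rather than a silent default.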

Another similarly trivial case is the JsonStringToDateTimeConverter:

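```csharp
using System;
using System.Globalization;
using System.Text.Json;
using System.Text.Json.Serialization;

public class JsonStringToDateTimeConverter : JsonConverter<DateTime>
{
    // Invariant culture keeps parsing independent of the host's regional settings.
    public override DateTime Read(ref Utf8JsonReader reader, Type typeToConvert, JsonSerializerOptions options) =>
        DateTime.Parse(reader.GetString(), CultureInfo.InvariantCulture, DateTimeStyles.RoundtripKind);

    // "O" (round-trip) keeps fractional seconds and DateTimeKind, unlike the culture-dependent ToString().
    public override void Write(Utf8JsonWriter writer, DateTime value, JsonSerializerOptions options) =>
        writer.WriteStringValue(value.ToString("O", CultureInfo.InvariantCulture));
}
```

Pretty straightforward, right?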
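Decorating every DateTime property with [JsonConverter] works, but a converter can also be registered once on JsonSerializerOptions.Converters, in which case it applies to every property of that type that doesn't carry its own converter attribute. A minimal sketch, assuming a hypothetical fixed "yyyy-MM-dd HH:mm:ss" storage format (FixedFormatDateTimeConverter, VideoTimestamps and GlobalConverterOptions are illustrative names):

```csharp
using System;
using System.Globalization;
using System.Text.Json;
using System.Text.Json.Serialization;

// Hypothetical converter for an assumed fixed storage format.
public class FixedFormatDateTimeConverter : JsonConverter<DateTime>
{
    private const string Format = "yyyy-MM-dd HH:mm:ss";

    public override DateTime Read(ref Utf8JsonReader reader, Type typeToConvert, JsonSerializerOptions options) =>
        DateTime.ParseExact(reader.GetString(), Format, CultureInfo.InvariantCulture);

    public override void Write(Utf8JsonWriter writer, DateTime value, JsonSerializerOptions options) =>
        writer.WriteStringValue(value.ToString(Format, CultureInfo.InvariantCulture));
}

// Hypothetical model with no [JsonConverter] attributes at all.
public class VideoTimestamps
{
    public DateTime Created { get; set; }
    public DateTime Modified { get; set; }
}

public static class GlobalConverterOptions
{
    // Registered once here, the converter handles every DateTime property
    // that has no converter attribute of its own.
    public static readonly JsonSerializerOptions Instance = Create();

    private static JsonSerializerOptions Create()
    {
        var options = new JsonSerializerOptions();
        options.Converters.Add(new FixedFormatDateTimeConverter());
        return options;
    }
}
```

A property-level [JsonConverter] attribute still takes precedence over a converter registered this way, so the two approaches can be mixed.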

Further reading on how to write JsonConverters that can handle just about any possible scenario can be found in the official documentation article on writing custom converters for JSON serialization.

Some(what) advanced gotchas

One point I made sure to mention in the closing remarks of my previous post was that System.Text.Json doesn't have feature parity with Newtonsoft.Json, at least not for the time being.

A frequent use case of Newtonsoft.Json is loading a JObject from a JSON string. There are numerous reasons one might want to do that, including (but not limited to) manipulating the resulting object's nodes (adding or removing them), or simply not having the time or inclination to care about type safety and making everything dynamic because it's a throwaway weekend project.

Regardless of the reason, the Newtonsoft.Json.Linq namespace lets us parse a JSON string into a JObject and then convert that JObject to a strongly-typed .NET class:

```csharp
using Newtonsoft.Json.Linq;

var dynamoJObject = JObject.Parse(dynamoJsonString);
var dynamoDataPartialClass = dynamoJObject.ToObject<DynamoDataPartialClass>();
```

So how do we achieve the same result using System.Text.Json instead?

```csharp
using System.Text.Json;

var serializeOptions = new JsonSerializerOptions
{
    WriteIndented = true,
    AllowTrailingCommas = true,
    IgnoreNullValues = true // obsolete from .NET 5 onwards in favor of DefaultIgnoreCondition
};

// JsonDocumentParser is our own helper class, defined below.
var dynamoJsonElement = JsonDocumentParser.Parse(dynamoJsonString);
var dynamoDataPartialClass = dynamoJsonElement.ToObject<DynamoDataPartialClass>(serializeOptions);
```

This snippet starts by creating a new instance of the JsonSerializerOptions class with a few options set, which we've covered already. So what's that JsonDocumentParser class on the following line? It's a small helper of our own:

```csharp
using System.Buffers;   // ArrayBufferWriter<T>
using System.Text.Json;

public static class JsonDocumentParser
{
    // Parses the input and returns a copy of the root element that outlives the JsonDocument.
    // Malformed input throws a JsonException, which is left to propagate to the caller.
    public static JsonElement Parse(string input)
    {
        using var doc = JsonDocument.Parse(input);
        return doc.RootElement.Clone();
    }

    // Extension method: writes the element out as UTF-8 JSON and deserializes that into T.
    public static T ToObject<T>(this JsonElement element, JsonSerializerOptions? options = null)
    {
        var bufferWriter = new ArrayBufferWriter<byte>();
        using (var writer = new Utf8JsonWriter(bufferWriter))
        {
            element.WriteTo(writer); // flushed when the writer is disposed
        }

        // Fully qualified to avoid clashing with our own JsonSerializer wrapper class.
        return System.Text.Json.JsonSerializer.Deserialize<T>(bufferWriter.WrittenSpan, options);
    }
}
```

JsonDocument is a System.Text.Json class that provides a mechanism for examining the structural content of a JSON value without automatically instantiating data values, which means fewer memory allocations! However, there's a subtle catch: notice how JsonDocument.Parse() needs a using declaration? This class uses resources from pooled memory to minimize the impact of the garbage collector (GC) in high-usage scenarios. Failing to properly dispose of this object will result in the memory not being returned to the pool, which will increase GC impact across various parts of the framework.

Furthermore, right after the JsonDocument.Parse() call in JsonDocumentParser.Parse, there's a call to RootElement.Clone(). RootElement is a property of type JsonElement and, as you might deduce, it represents the root element of the JSON document. Clone() returns a copy of this JsonElement that can be safely stored beyond the lifetime of the original JsonDocument.
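To make the lifetime point concrete, here's a small sketch (CloneDemo is a hypothetical helper, not part of our codebase) contrasting an element returned without Clone() with a cloned one:

```csharp
using System.Text.Json;

public static class CloneDemo
{
    // Returns the root element WITHOUT cloning: it still points into the document's
    // pooled buffers, which are released when the document is disposed.
    public static JsonElement RootWithoutClone(string json)
    {
        using var doc = JsonDocument.Parse(json);
        return doc.RootElement;
    }

    // Returns a clone that owns its own copy of the data and outlives the document.
    public static JsonElement RootWithClone(string json)
    {
        using var doc = JsonDocument.Parse(json);
        return doc.RootElement.Clone();
    }
}
```

Reading from the uncloned element after its document has been disposed throws an ObjectDisposedException, while the clone keeps working.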

Finally, JsonElement is a struct that represents a specific JSON value within a JsonDocument. With this approach, practically every node within a JSON object is a JsonElement, and so is the whole JSON object itself.
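Since every node is a JsonElement, walking a whole document needs nothing more than EnumerateObject() and EnumerateArray(), and no .NET classes are instantiated along the way. A minimal sketch (JsonElementWalker is a hypothetical helper) that counts every value in a tree:

```csharp
using System.Text.Json;

public static class JsonElementWalker
{
    // Counts every JSON value in the tree: objects, arrays and primitives alike.
    // Each node reached through EnumerateObject/EnumerateArray is itself a JsonElement.
    public static int CountNodes(JsonElement element)
    {
        int count = 1; // the element itself
        switch (element.ValueKind)
        {
            case JsonValueKind.Object:
                foreach (JsonProperty property in element.EnumerateObject())
                {
                    count += CountNodes(property.Value);
                }
                break;
            case JsonValueKind.Array:
                foreach (JsonElement item in element.EnumerateArray())
                {
                    count += CountNodes(item);
                }
                break;
        }
        return count;
    }
}
```

For a document like {"questions":[{"text":"Hi","questionId":1}]}, the count is 5: the root object, the array, the question object and its two primitive values.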

Outro

This turned out somewhat longer than I'd originally planned, but I felt I should point out as much as possible for people pondering the specifics of making this move. It's not an easy thing to do overall: it will inevitably cost development time, and you might still encounter unforeseen conversion issues (especially with JSON payloads coming from third-party providers) even after extensive testing. This actually happened to us in a production environment; it was a fun day.

Hopefully this goes some way towards helping some of you see beyond the migration veil into the promised land of performance benefits (as highlighted in the previous post of this series).

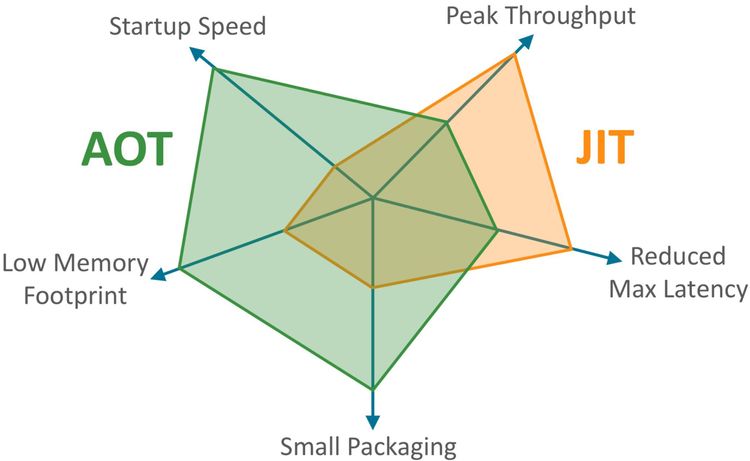

Next time round, for the final entry in this series, we'll cover the new ReadyToRun feature of .NET Core and how to ride the new wave of ahead-of-time (AOT) compilation in AWS Lambda .NET projects to reap that sweet performance nectar.